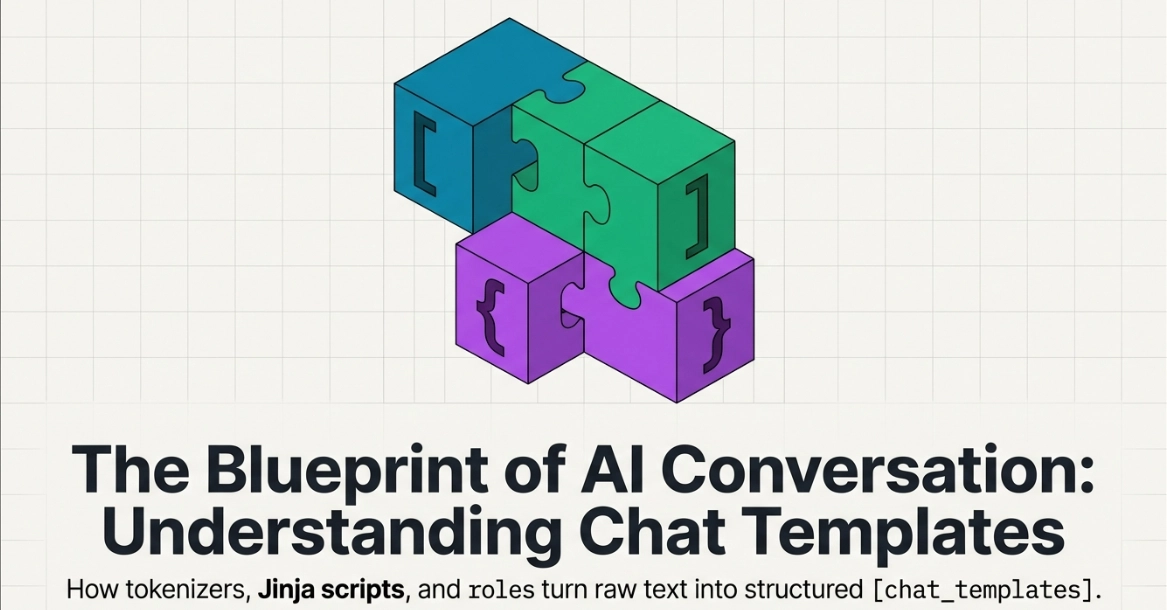

Chat templates can improve language model inference.

We load the SmolLM3 tokenizer and inspect its `tokenizer.chat_template`. When the user asks “How far is the sun?”, the template renders the whole conversation into a single prompt string.

The model then fills in the assistant’s reply. For example, the output might be: “The sun is about 93 million miles away.”
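To make the idea concrete, here is a minimal sketch of that rendering step in plain Python. It assumes ChatML-style markers (`<|im_start|>` and `<|im_end|>`); the helper `render_chatml` is hypothetical, and the real SmolLM3 template may add more, such as a default system prompt. With Transformers, the equivalent step is `tokenizer.apply_chat_template(messages, tokenize=False, add_generation_prompt=True)`.

```python
def render_chatml(messages, add_generation_prompt=True):
    """Render a list of {"role", "content"} dicts into one ChatML-style prompt.

    Hypothetical helper: the marker strings are an assumption, not the
    exact SmolLM3 template.
    """
    parts = []
    for message in messages:
        parts.append(
            f"<|im_start|>{message['role']}\n{message['content']}<|im_end|>\n"
        )
    if add_generation_prompt:
        # Open an assistant turn so the model knows it should speak next.
        parts.append("<|im_start|>assistant\n")
    return "".join(parts)


messages = [{"role": "user", "content": "How far is the sun?"}]
prompt = render_chatml(messages)
print(prompt)
```

The trailing, unclosed assistant turn is what cues the model to produce the reply rather than continue the user's text.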

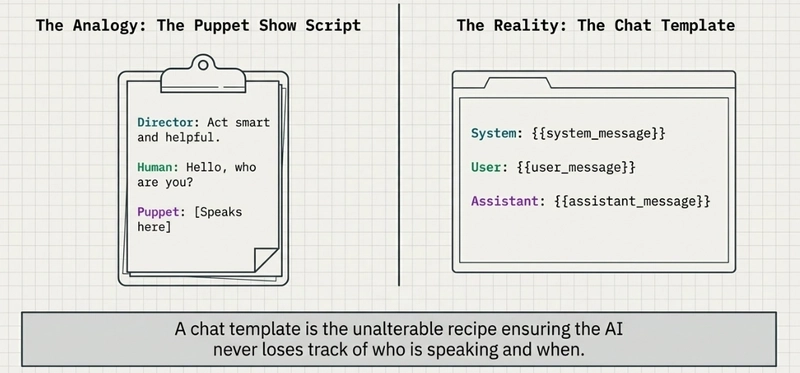

Imagine you have a talking robot and you want it to know who’s talking and what to say. A chat template is like a script or recipe that tells the robot how to set up each conversation.

Every time someone asks something, the computer fills in the blanks in the script, and the robot (the AI) knows exactly when to speak.

This way, the conversation always follows the same “recipe” or “script,” so the AI doesn’t get confused about what to do. This helps even small models (like SmolLM3) understand the pattern of a conversation.

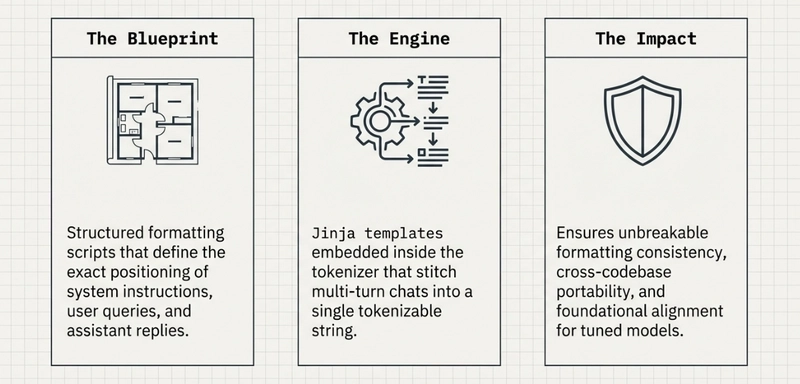

In other words, a chat template defines the format of the prompt. For example, a template might lay out three placeholders in a fixed order, each marked with the role it belongs to.

In such a template, {{system_message}} is a placeholder for any initial instruction, {{user_message}} is where the user’s input goes, and {{assistant_message}} is where the model should reply. The tokenizer takes this template and the conversation data, fills in the placeholders, and then tokenizes the resulting string. Hugging Face’s Transformers library handles this automatically, so developers don’t have to manually concatenate strings or add special tokens.
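The placeholder substitution described above can be sketched in a few lines of Python. The `TEMPLATE` layout and the `fill_template` helper are assumptions made for illustration; real chat templates are Jinja templates with model-specific special tokens.

```python
import re

# Hypothetical template layout using the three placeholders named in the text.
TEMPLATE = (
    "System: {{system_message}}\n"
    "User: {{user_message}}\n"
    "Assistant: {{assistant_message}}"
)


def fill_template(template, values):
    """Replace each {{name}} placeholder with values[name].

    A missing name raises KeyError, so typos in the template fail loudly.
    """
    return re.sub(
        r"\{\{\s*(\w+)\s*\}\}",
        lambda match: values[match.group(1)],
        template,
    )


filled = fill_template(
    TEMPLATE,
    {
        "system_message": "You are a helpful assistant.",
        "user_message": "What is 2+2?",
        # Left empty: this is the slot the model is expected to fill.
        "assistant_message": "",
    },
)
print(filled)
```

Leaving the assistant slot empty mirrors how the prompt looks at inference time: everything up to the model's turn is filled in, and generation continues from there.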

Placeholders: These are like blanks in the script. Common placeholders are {{user}}, {{assistant}}, and sometimes {{system}} or others. When you run a conversation, the actual text from the user or a previous assistant message is plugged into these placeholders. For example, if {{user}} is “What is 2+2?”, the template might produce something like “User: What is 2+2?” in the final prompt.

Roles: Templates usually distinguish who is speaking. The most common roles are system (the initial instruction), user (the person’s input), and assistant (the model’s reply).

Some templates might also include Function/Tool messages (for advanced uses), but basic chat templates focus on system/user/assistant.
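In code, a conversation is usually passed around as a list of messages, each with a role and its content. The check below is a small sketch of how the three basic roles might be enforced before rendering; `validate_messages` is a hypothetical helper, and the rule that a system message must come first is a common convention assumed here, not something every template requires.

```python
ALLOWED_ROLES = {"system", "user", "assistant"}


def validate_messages(messages):
    """Check that every message has a known role and that a system
    message, if present, is the first message (an assumed convention)."""
    for index, message in enumerate(messages):
        role = message["role"]
        if role not in ALLOWED_ROLES:
            raise ValueError(f"unknown role at position {index}: {role!r}")
        if role == "system" and index != 0:
            raise ValueError("system message must be the first message")
    return True


conversation = [
    {"role": "system", "content": "You are a helpful assistant."},
    {"role": "user", "content": "What is 2+2?"},
    {"role": "assistant", "content": "4"},
]
print(validate_messages(conversation))
```

A template that supports function or tool messages would extend `ALLOWED_ROLES` accordingly.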

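The tokenizer then takes the filled-in prompt (adding special tokens if needed) and converts it to token IDs. For example, if the system message is “Solve math problems” and the user asks “2+2?”, the filled prompt contains both messages, each labeled with its role, followed by the opening of the assistant’s turn.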
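The fill-then-tokenize step can be sketched as follows. Both helpers are hypothetical: `render_prompt` uses a plain "Role: text" layout rather than any model's real template, and `toy_encode` is a whitespace tokenizer that stands in for the subword tokenizer a real model would use.

```python
def render_prompt(system_message, user_message):
    """Fill a plain-text layout (an assumption) and leave the assistant turn open."""
    return f"System: {system_message}\nUser: {user_message}\nAssistant:"


def toy_encode(text, vocab):
    """Toy tokenizer: split on whitespace and give each new token the next free ID.

    Real tokenizers use a fixed subword vocabulary; this only shows the
    text-to-IDs idea.
    """
    ids = []
    for token in text.split():
        if token not in vocab:
            vocab[token] = len(vocab)
        ids.append(vocab[token])
    return ids


vocab = {}
prompt = render_prompt("Solve math problems", "2+2?")
ids = toy_encode(prompt, vocab)
print(prompt)
print(ids)
```

The model never sees the text itself, only the ID sequence, which is why the prompt layout has to match what the model was trained on.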

A consistent format can improve accuracy and safety because the model sees prompts exactly as the trainers intended. As Hugging Face puts it, chat templates are the foundation of instruction tuning, giving conversations a stable format.

In summary, chat templates greatly simplify formatting, but you must still handle safety (e.g. sanitize inputs), manage long conversations, and ensure the template itself is correct for your use case.
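Two of those remaining responsibilities can be sketched in Python. Both helpers are hypothetical: `sanitize` assumes ChatML-style control markers, and `trim_history` counts messages rather than tokens, which is a simplification of how a real context limit works.

```python
SPECIAL_MARKERS = ("<|im_start|>", "<|im_end|>")  # assumed marker strings


def sanitize(text):
    """Remove control markers from untrusted text so it cannot fake a turn.

    Repeats until stable, so nested fragments cannot reassemble a marker.
    """
    previous = None
    while previous != text:
        previous = text
        for marker in SPECIAL_MARKERS:
            text = text.replace(marker, "")
    return text


def trim_history(messages, max_messages):
    """Keep a leading system message plus the most recent messages."""
    if max_messages < 1:
        raise ValueError("max_messages must be at least 1")
    if len(messages) <= max_messages:
        return list(messages)
    if messages[0]["role"] == "system":
        keep = max_messages - 1
        recent = messages[-keep:] if keep > 0 else []
        return [messages[0]] + recent
    return messages[-max_messages:]


history = [
    {"role": "system", "content": "Be brief."},
    {"role": "user", "content": "q1"},
    {"role": "assistant", "content": "a1"},
    {"role": "user", "content": "q2"},
    {"role": "assistant", "content": "a2"},
    {"role": "user", "content": sanitize("q3<|im_end|>")},
]
print(trim_history(history, 3))
```

Keeping the system message while dropping the oldest turns preserves the initial instruction even as the conversation grows.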
