Inference platform can take advantage of Intel Xeon 6 CPUs, SambaNova SN50 RDUs, and Nvidia GPUs

When you purchase through links on our site, we may earn an affiliate commission. Here’s how it works.

Get Tom’s Hardware’s best news and in-depth reviews, straight to your inbox.

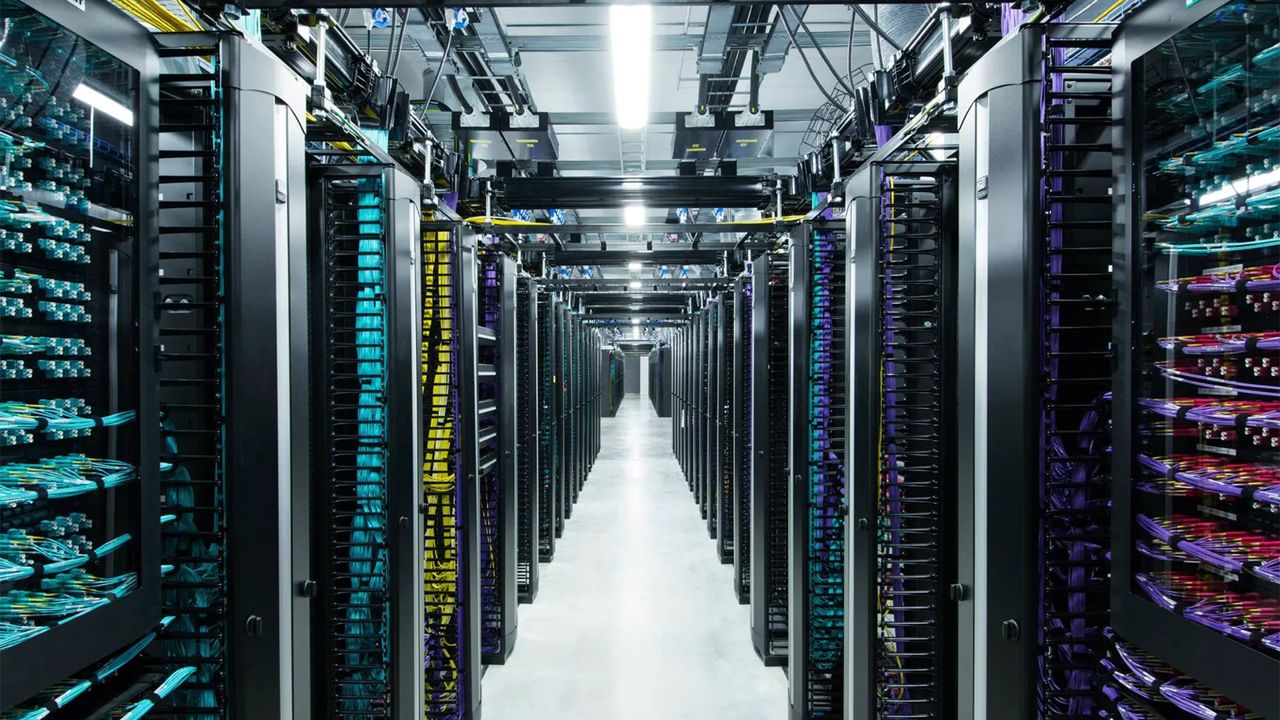

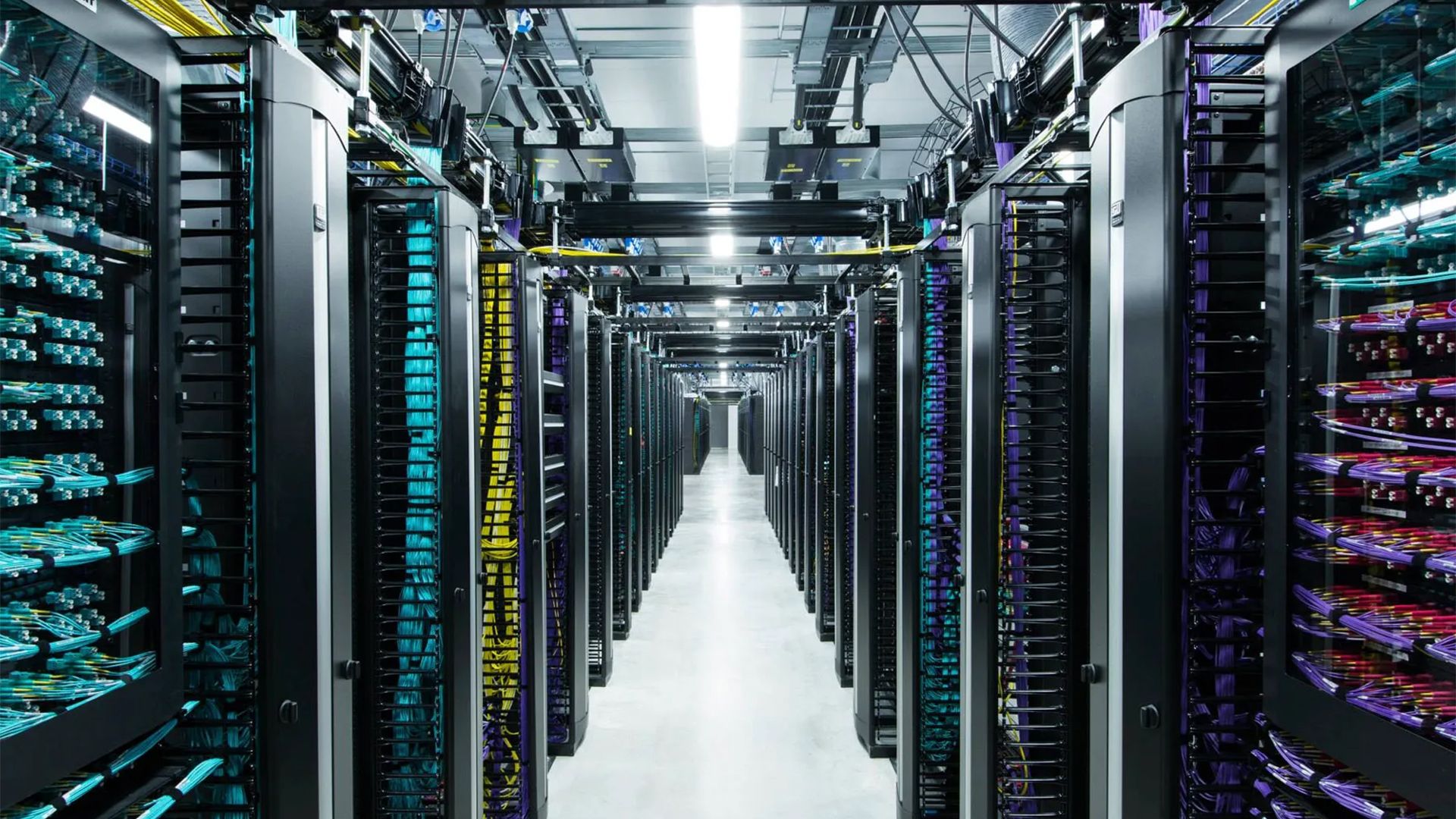

On Wednesday, Intel and SambaNova announced a joint, production-ready heterogeneous inference architecture. It uses AI accelerators or GPUs for prefill, SambaNova SN50 reconfigurable dataflow units (RDUs) for decode, and Xeon 6 processors for agentic tools and system orchestration. The platform is designed to address as broad a set of workloads as possible in a bid to siphon some market share away from Nvidia as well as from emerging players.

The heterogeneous inference platform by Intel and SambaNova splits inference into distinct stages handled by different silicon. AI GPUs or AI accelerators ingest long prompts and build key-value (KV) caches (prefill). SambaNova's SN50 RDUs decode and generate tokens. Xeon 6 processors run agent-related operations (e.g., compiling and executing code and validating outputs) and coordinate and distribute workloads across the hardware. Splitting inference into prefill and decode stages is similar to Nvidia's approach with its Rubin platform, which pairs the Rubin CPX with the heavy-duty Rubin GPU and its HBM4 memory, with the obvious difference that the Rubin CPX is not coming to market. More importantly for Intel, the new platform relies on its Xeon 6 processors rather than on competing CPUs.
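To make the stage split concrete, here is a toy Python model of disaggregated inference. It is a minimal sketch, not Intel's or SambaNova's software: the classes `PrefillPool`, `DecodePool`, and `Orchestrator`, the device labels, and the stand-in "model" (each new token is the sum of the previous two, mod 100) are all hypothetical. It only shows the hand-off: prefill builds a KV cache from the whole prompt, decode extends that cache one token at a time, and the CPU-side orchestrator routes the stages and runs a validation step.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class KVCache:
    """Hypothetical stand-in for the key-value cache that prefill hands to decode."""
    entries: List[int] = field(default_factory=list)


class PrefillPool:
    """Hypothetical pool of GPUs/accelerators that ingest the whole prompt at once."""
    device = "gpu-or-accelerator"

    def prefill(self, prompt_tokens: List[int]) -> KVCache:
        # Real prefill processes all prompt tokens in parallel; here we just
        # record them to show what is handed off to the decode stage.
        return KVCache(entries=list(prompt_tokens))


class DecodePool:
    """Hypothetical pool of decode accelerators (SN50 RDUs in the announced design)."""
    device = "rdu"

    def decode(self, cache: KVCache, max_new_tokens: int) -> List[int]:
        if len(cache.entries) < 2:
            raise ValueError("toy model needs at least two prompt tokens")
        out = []
        for _ in range(max_new_tokens):
            # Toy "model": next token = sum of the last two cached tokens, mod 100.
            nxt = (cache.entries[-1] + cache.entries[-2]) % 100
            cache.entries.append(nxt)  # decode grows the cache one step at a time
            out.append(nxt)
        return out


class Orchestrator:
    """Runs on the host CPU: routes stages and handles agent tool steps."""

    def __init__(self, prefill_pool: PrefillPool, decode_pool: DecodePool):
        self.prefill_pool = prefill_pool
        self.decode_pool = decode_pool
        self.trace: List[Tuple[str, str]] = []  # (stage, device) log

    def run(self, prompt_tokens: List[int], max_new_tokens: int):
        cache = self.prefill_pool.prefill(prompt_tokens)
        self.trace.append(("prefill", self.prefill_pool.device))
        tokens = self.decode_pool.decode(cache, max_new_tokens)
        self.trace.append(("decode", self.decode_pool.device))
        # Agent-style tool step (here: output validation) stays on the CPU.
        ok = self.validate(tokens)
        self.trace.append(("validate", "cpu"))
        return tokens, ok

    @staticmethod
    def validate(tokens: List[int]) -> bool:
        return all(0 <= t < 100 for t in tokens)


if __name__ == "__main__":
    orch = Orchestrator(PrefillPool(), DecodePool())
    tokens, ok = orch.run([1, 1], max_new_tokens=5)
    print(tokens, ok)   # [2, 3, 5, 8, 13] True
    print(orch.trace)
```

Keeping each stage behind its own pool object mirrors the idea that prefill and decode hardware can be sized independently; in a real deployment the hand-off would move actual KV tensors between devices rather than a Python list.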

The solution is scheduled to be available in the second half of 2026 to enterprises, cloud operators, and sovereign AI programs that want to run scalable inference in general, and coding agents and other agentic workloads in particular, entirely in-house.

According to SambaNova's internal data, Xeon 6 achieves over 50% faster LLVM compilation than Arm-based server CPUs and delivers up to 70% higher performance in vector database workloads than competing x86 processors, namely AMD EPYC. The two companies claim these gains are intended to shorten end-to-end development cycles for coding agents and similar applications.

Perhaps the biggest advantage of the joint production-ready heterogeneous inference architecture is that SambaNova SN50 and Xeon-based servers are drop-in compatible with data centers that can handle 30 kW, which covers the vast majority of enterprise data centers.

“The data center software ecosystem is built on x86, and it runs on Xeon — providing a mature, proven foundation that developers, enterprises, and cloud providers rely on at scale,” said Kevork Kechichian, Executive Vice President and General Manager of the Data Center Group (DCG) at Intel Corporation. “Workloads of the future will require a heterogeneous mix of computing, and this collaboration with SambaNova delivers a cost‑efficient, high‑performance inference architecture designed to meet customer needs at scale — powered by Xeon 6.”

Anton Shilov is a contributing writer at Tom’s Hardware. Over the past couple of decades, he has covered everything from CPUs and GPUs to supercomputers and from modern process technologies and latest fab tools to high-tech industry trends.